The rise of generative AI has fundamentally changed how we create and consume written content. As language models become increasingly sophisticated, distinguishing between human and machine-generated text has become essential for educators, editors, and professionals across industries. Understanding what makes a sentence sound AI-written requires examining the specific linguistic patterns, structural choices, and stylistic markers that hint at a text's origin. This knowledge helps content validators maintain authenticity and integrity in their work.

Repetitive Sentence Structure and Predictable Patterns

AI-generated text often falls into recognizable structural patterns that human writers naturally avoid. Machine learning models tend to favor certain sentence constructions repeatedly, creating a monotonous rhythm that becomes apparent across longer passages.

The Formula Behind AI Sentence Construction

Language models generate text by repeatedly predicting a statistically likely next token (a word or word fragment) based on training data. This process inherently favors common patterns over creative variation. AI writing frequently exhibits:

Consistent sentence length throughout paragraphs

Overuse of transitional phrases like "moreover," "furthermore," and "additionally"

Repetitive opening structures across multiple sentences

Predictable subject-verb-object arrangements without stylistic variation

What makes a sentence sound AI-written often stems from this statistical approach to word selection: the repetitive patterns listed above emerge from probability-based text generation rather than intentional creative choices.
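One of the patterns above, repetitive sentence openings, is easy to check by hand or with a few lines of code. The following is a minimal sketch, not a production detector: the function names are hypothetical, and the sentence splitter is a deliberately naive assumption (it breaks on `.`, `!`, or `?` followed by whitespace, so abbreviations will confuse it).

```python
import re
from collections import Counter


def split_sentences(text):
    # Naive splitter (an assumption): break after ., !, or ? followed by whitespace.
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def opener_counts(text):
    # Count the lowercase first word of each sentence.
    openers = []
    for sentence in split_sentences(text):
        match = re.match(r"[A-Za-z']+", sentence)
        if match:
            openers.append(match.group(0).lower())
    return Counter(openers)


sample = (
    "This tool is fast. This tool is simple. "
    "This tool is free. We tested it twice."
)
counts = opener_counts(sample)
print(counts.most_common(2))
```

A passage in which one opener accounts for most sentences is worth a second look, although deliberate repetition for emphasis produces the same count.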

Measuring Structural Uniformity

Professional editors notice when sentence variety disappears. Human writers instinctively mix short, punchy statements with longer, complex constructions. They vary their paragraph lengths and adjust rhythm based on content importance.

AI models, conversely, maintain consistent paragraph sizing and sentence complexity throughout a document. This uniformity becomes particularly evident in longer pieces where human energy and attention naturally fluctuate.

| Human Writing | AI-Generated Writing |
|---|---|
| Variable sentence length (5-30+ words) | Consistent length (12-18 words) |
| Natural rhythm changes | Mechanical consistency |
| Strategic paragraph breaks | Uniform paragraph sizing |
| Intentional repetition for emphasis | Unintentional repetition from probability |
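Sentence-length uniformity can be expressed as a number. The sketch below computes the coefficient of variation of sentence lengths (population standard deviation divided by the mean), so a value near zero means every sentence is about the same length. The function names and the naive sentence splitter are assumptions for illustration, and no particular threshold is implied.

```python
import re
import statistics


def sentence_lengths(text):
    # Naive splitter (an assumption): break after ., !, or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def length_variation(text):
    # Coefficient of variation of sentence length; 0.0 means perfectly uniform.
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    mean = statistics.mean(lengths)
    return statistics.pstdev(lengths) / mean if mean else 0.0


uniform = "One two three four. Five six seven eight. Nine ten eleven twelve."
varied = "Stop. This sentence runs on for quite a few more words than the first."

print(length_variation(uniform))
print(length_variation(varied))
```

The ratio is used instead of the raw standard deviation so that passages with long average sentences and passages with short ones can be compared on the same scale.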

Generic Language and Absence of Specificity

Another hallmark of machine-generated content is reliance on broad, non-specific language. AI models excel at producing grammatically correct, contextually appropriate text but struggle with precise, distinctive word choices.

The Vagueness Problem

When examining what makes a sentence sound AI-written, specificity is one of the clearest differentiators. AI often produces statements like "many experts agree" or "recent studies show" without citing actual experts or studies. This vagueness has a practical effect: the text avoids falsifiable claims that the model cannot verify.

Common generic phrases in AI text include:

"It's important to note that..."

"In today's digital landscape..."

"Various factors contribute to..."

"One must consider..."

"This approach offers numerous benefits..."

Human writers with subject matter expertise naturally include specific details, personal observations, and concrete examples. They reference particular dates, cite specific researchers, and draw from direct experience. Tools like the AI vocabulary finder can identify these telltale generic patterns across documents.
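A simple phrase scan illustrates how such generic wording can be surfaced. This is a minimal sketch that uses only the phrases quoted in this section; it is not the implementation of any particular tool, and the function name `find_generic_phrases` is hypothetical.

```python
# Phrases taken from the examples in this section; a real list would be longer.
GENERIC_PHRASES = [
    "many experts agree",
    "recent studies show",
    "it's important to note that",
    "in today's digital landscape",
    "various factors contribute to",
    "one must consider",
    "this approach offers numerous benefits",
]


def find_generic_phrases(text):
    # Lowercase the text and normalize curly apostrophes before matching.
    normalized = text.lower().replace("\u2019", "'")
    return {p: normalized.count(p) for p in GENERIC_PHRASES if p in normalized}


draft = (
    "It's important to note that recent studies show gains. "
    "Recent studies show more."
)
print(find_generic_phrases(draft))
```

A hit is only a prompt for review: a human writer who follows "recent studies show" with an actual citation is doing exactly what this section recommends.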

Missing Personal Voice and Authentic Experience

AI-generated text lacks genuine personal perspective. While models can mimic different tones, they cannot draw from actual lived experience or embed authentic personality into writing. The text remains technically proficient but emotionally hollow.

Human writing contains idiosyncrasies, unique metaphors, and personal linguistic fingerprints. These elements emerge unconsciously from individual communication styles, regional influences, and personal reading histories.

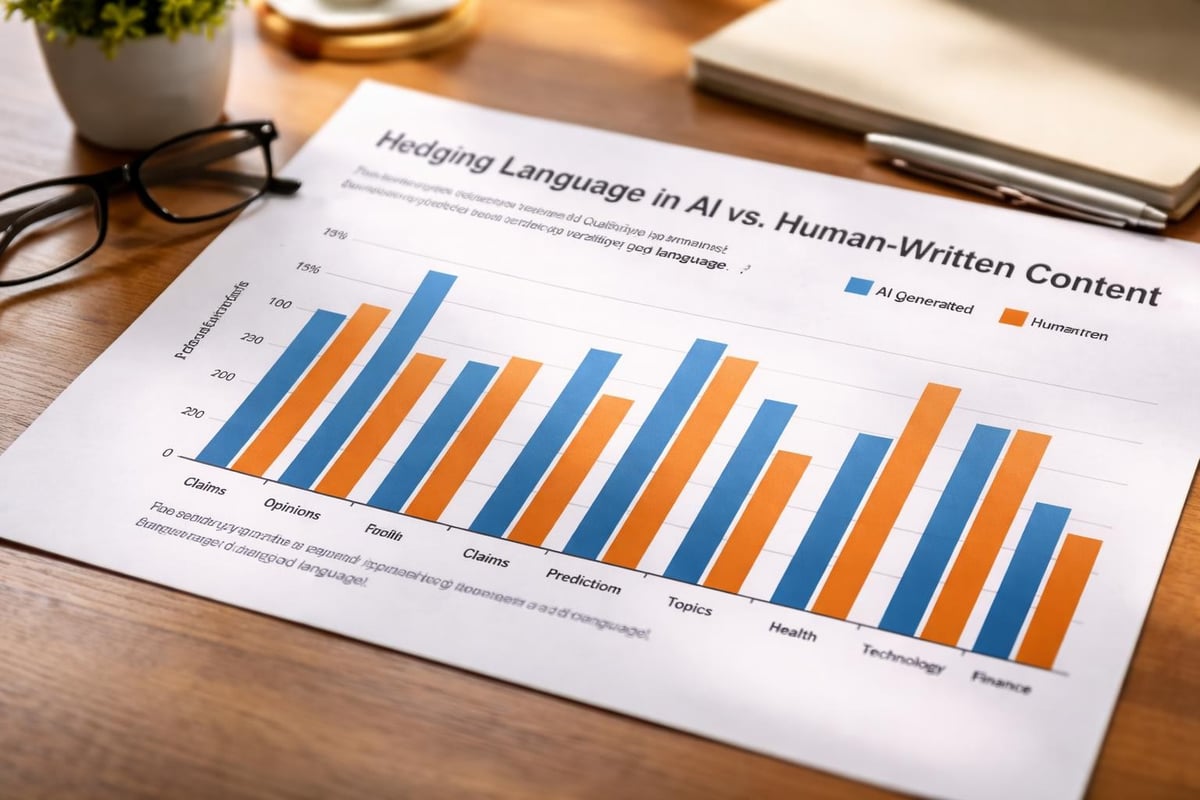

Excessive Hedging and Qualifier Overload

Language models demonstrate remarkable caution in their assertions, often filling sentences with unnecessary qualifiers and hedging language. This tendency reflects their training to avoid definitive statements that might be challenged.

The Over-Qualification Phenomenon

What makes a sentence sound AI-written frequently involves excessive use of qualifying terms. AI models insert words like "arguably," "potentially," "typically," and "generally" far more often than necessary. This creates a tentative tone that undermines authority.

Consider these examples:

AI version: "This approach could potentially be considered somewhat effective in certain contexts."

Human version: "This approach works well for small teams."

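The AI version stacks hedge upon hedge ("could," "potentially," "be considered," "somewhat," "in certain contexts") where the human version needs only a single scope limit ("for small teams"). Human writers, especially those with expertise, make confident statements backed by their knowledge and experience.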
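The two example sentences can be compared mechanically. The sketch below counts single-word hedges against a small word list; the list itself is an assumption assembled from the terms mentioned in this section, and the function name is hypothetical. Multi-word hedges such as "in certain contexts" are not matched, so the count is a lower bound.

```python
import re

# Single-word hedges drawn from this section's examples (an illustrative list).
HEDGES = {"arguably", "potentially", "typically", "generally", "could", "somewhat"}


def hedge_count(sentence):
    # Count words in the sentence that appear in the hedge list.
    words = re.findall(r"[a-z']+", sentence.lower())
    return sum(1 for w in words if w in HEDGES)


ai_version = (
    "This approach could potentially be considered somewhat effective "
    "in certain contexts."
)
human_version = "This approach works well for small teams."

print(hedge_count(ai_version), hedge_count(human_version))
```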

Balanced Certainty in Human Writing

Professional writers understand when to hedge and when to assert. They use qualifiers strategically for genuinely uncertain claims while making confident statements about established facts. AI lacks this nuanced judgment, applying caution uniformly across all content.

Unnatural Transition and Flow Issues

The way sentences connect reveals significant differences between human and machine authorship. Understanding how to spot AI-generated text requires examining logical flow and transition quality.

Forced Connectivity

AI models prioritize surface-level coherence, ensuring each sentence relates grammatically to the previous one. However, deeper logical progression often suffers. The text moves forward without building meaningful argumentative structure.

Transition problems in AI writing:

Overuse of basic connectors (however, therefore, thus)

Transitions that state the obvious

Missing conceptual bridges between complex ideas

Repetitive paragraph-to-paragraph linking patterns

Human writers develop thesis-driven arguments where each paragraph advances a central claim. AI-generated content often circles topics without progressing meaningfully.
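Connector overuse, the first item in the list above, can be estimated as the share of sentences that open with a stock connector. This is a rough sketch under stated assumptions: the connector list combines the words named in this article, the sentence splitter is naive, and `connector_opening_share` is a hypothetical name.

```python
import re

# Connectors named in this article; matched only at the start of a sentence.
OPENING_CONNECTOR = re.compile(
    r"(?:however|therefore|thus|moreover|furthermore|additionally)\b",
    re.IGNORECASE,
)


def connector_opening_share(text):
    # Fraction of sentences whose first word is one of the connectors.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        return 0.0
    hits = sum(1 for s in sentences if OPENING_CONNECTOR.match(s))
    return hits / len(sentences)


passage = (
    "However, it rained. Therefore, we stayed. "
    "We read books. Thus, the day passed."
)
print(connector_opening_share(passage))
```

The measure says nothing about whether the argument actually progresses, which still requires a human reader.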

The "List" Mentality

AI frequently structures information as implied lists, even in prose format. Rather than weaving ideas together organically, the model presents parallel points sequentially without synthesis. This creates what editors call "listification" where every paragraph exists independently rather than building cumulative understanding.

Vocabulary Anomalies and Word Choice Patterns

Specific vocabulary selections often reveal AI authorship. Language models favor certain words and phrases that appear with suspicious frequency across AI-generated content.

Statistical Word Preferences

Research into AI detection has identified vocabulary signatures associated with machine-generated text. Words like "delve," "navigate," "landscape," "robust," and "leverage" appear disproportionately in AI writing, and these statistically overrepresented terms are a large part of what makes writing sound like AI.

| Overused AI Terms | Human Alternatives |
|---|---|
| Delve into | Examine, explore, investigate |
| Navigate challenges | Face problems, overcome obstacles |
| Digital landscape | Online environment, digital space |
| Robust solution | Strong approach, effective method |
| Leverage resources | Use tools, apply assets |

What makes a sentence sound AI-written frequently involves these vocabulary choices appearing in combinations that human writers rarely use.
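To see how often the flagged terms appear in a draft, a rate per 1,000 words is more useful than a raw count, because it allows documents of different lengths to be compared. The sketch below is illustrative only: the stems cover just the five words named above, prefix matching is a crude assumption that will also catch unrelated words sharing a stem, and `flagged_rate` is a hypothetical name.

```python
import re
from collections import Counter

# Stems for the five terms named above, so "delves" and "leveraging" also match.
FLAGGED_STEMS = ("delv", "navigat", "landscape", "robust", "leverag")


def flagged_rate(text):
    # Return per-stem hit counts and the overall rate per 1,000 words.
    words = re.findall(r"[a-z]+", text.lower())
    hits = Counter(
        stem for w in words for stem in FLAGGED_STEMS if w.startswith(stem)
    )
    per_thousand = 1000 * sum(hits.values()) / len(words) if words else 0.0
    return hits, per_thousand


draft = "We delve into the digital landscape and leverage robust tools."
hits, rate = flagged_rate(draft)
print(dict(hits), rate)
```

A high rate is a reason to reread the passage, not proof of authorship: all five words are ordinary English that human writers also use.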

Register and Formality Inconsistencies

AI models sometimes struggle with maintaining appropriate register throughout a piece. They might shift between highly formal academic language and conversational tone without apparent reason. Human writers develop consistent voice aligned with their audience and purpose.

Emotional Flatness and Lack of Genuine Sentiment

Perhaps the most subtle indicator involves emotional authenticity. Characteristics of AI-generated text include the absence of genuine emotional resonance, even when discussing emotional topics.

Surface-Level Emotion Expression

AI can identify that certain topics warrant emotional language and insert appropriate words. However, the emotional expression remains performative rather than authentic. The text acknowledges feelings without conveying them.

Signs of artificial emotion:

Emotion words without supporting context

Generic emotional language ("very sad," "extremely happy")

Inappropriate emotional intensity for the topic

Consistent emotional tone regardless of content shifts

Human writers naturally modulate emotional expression based on personal investment and topic significance. Their emotional language connects to specific details and concrete experiences.

Missing Subtext and Implication

Human communication relies heavily on implication and subtext. Writers assume shared cultural knowledge and make references that require reader interpretation. AI tends toward explicit statement, avoiding ambiguity that might confuse or require cultural competency.

This explicitness makes AI writing feel pedagogical even in casual contexts. Everything gets spelled out rather than suggested or implied.

Contextual Awareness Problems

What makes a sentence sound AI-written often involves subtle contextual mistakes that human writers rarely make. While AI models handle broad context well, they struggle with nuanced situational awareness.

Temporal and Cultural Disconnects

AI-generated content sometimes includes anachronistic references or culturally inconsistent elements. The model might present outdated information as current or combine elements from incompatible contexts. Spotting AI-generated text involves watching for these contextual inconsistencies.

Human writers possess current awareness and cultural competency that prevents such errors. They understand temporal relevance and cultural appropriateness instinctively.

Domain-Specific Knowledge Gaps

AI models produce surface-level content across all topics but lack deep expertise. When discussing specialized subjects, the writing remains generic and avoids technical depth that would expose knowledge limitations. Experts immediately recognize this superficiality.

Technical Detection Approaches

Understanding linguistic markers helps, but systematic detection requires analytical tools. Professional detection platforms analyze multiple dimensions simultaneously to identify AI-generated content with high precision.

Multi-Factor Analysis Systems

Modern AI detection tools evaluate numerous factors concurrently. These systems examine:

Perplexity scores: Measuring text predictability

Burstiness analysis: Evaluating sentence length variation

Vocabulary distribution: Identifying statistical word preferences

Syntactic patterns: Detecting structural repetition

Semantic coherence: Assessing logical progression quality

What makes a sentence sound AI-written becomes quantifiable through these analytical dimensions. AI detectors work by combining these metrics into a comprehensive assessment model.
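Perplexity, the first metric in the list above, has a standard definition: the exponential of the average negative log-probability that a language model assigns to each token. The sketch below applies that formula to a list of per-token probabilities. In practice those probabilities would come from a language model; the numbers here are made up for illustration, and this is not a description of how any specific detector computes its score.

```python
import math


def perplexity(token_probs):
    # Perplexity = exp(-(1/N) * sum(log p_i)) over the N token probabilities.
    if not token_probs:
        raise ValueError("need at least one token probability")
    if any(p <= 0.0 or p > 1.0 for p in token_probs):
        raise ValueError("probabilities must be in the interval (0, 1]")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


# Hypothetical per-token probabilities, not output from a real model.
predictable = [0.9, 0.8, 0.95, 0.85]
surprising = [0.1, 0.2, 0.05, 0.3]

print(perplexity(predictable))
print(perplexity(surprising))
```

Lower perplexity means the text was more predictable to the scoring model, which is why highly predictable text tends to be flagged as more likely machine-generated.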

Integration with Plagiarism Detection

Comprehensive content validation requires examining both AI generation and plagiarism. Modern platforms integrate plagiarism checking with AI detection, providing complete content authenticity verification. This combined approach addresses the full spectrum of content integrity concerns.

Practical Implications for Content Professionals

Understanding these markers serves different stakeholders in distinct ways. Educators, editors, and content managers each apply this knowledge according to their specific needs.

Educational Applications

For educators, recognizing what makes a sentence sound AI-written helps maintain academic integrity. Teachers using AI detection can identify when students submit machine-generated assignments rather than original work. This capability supports fair assessment and ensures students develop genuine writing skills.

Editorial and Publishing Contexts

Publishers and editors need to verify content authenticity before publication. Writers and content creators also benefit from understanding these patterns: it helps them keep a recognizably human voice and avoid false flags from detection systems on legitimately human-authored work.

Professional Hiring and Recruitment

Organizations evaluating candidates through writing samples require confidence in content authenticity. AI detection for recruitment helps hiring managers verify that application materials genuinely represent candidate capabilities rather than machine-generated submissions.

Evolving Detection Challenges

AI writing capabilities continue improving, making detection increasingly complex. What makes a sentence sound AI-written in 2026 differs from the identifiers that worked effectively in 2023 or 2024.

Adaptive Model Development

Language models continue to evolve, and newer versions often show fewer of the patterns that earlier detection methods relied on. This creates an ongoing cycle where detection methods must advance alongside generation capabilities. Research on detection robustness explores approaches that remain effective despite model improvements.

The Human-AI Collaboration Question

Many content creators now use AI as a collaborative tool rather than a complete replacement. This hybrid approach creates new detection challenges, as content blends human creativity with machine assistance. Distinguishing between AI-assisted and fully AI-generated content requires sophisticated analytical approaches.

Building Detection Literacy

Developing personal detection capabilities benefits anyone working with written content. While automated tools provide quantitative analysis, human judgment remains essential for comprehensive evaluation.

Training Recognition Skills

Regular exposure to both human and AI-generated content builds intuitive recognition abilities. Content professionals should:

Review confirmed AI samples to internalize patterns

Practice analyzing suspicious text for telltale markers

Compare high-quality human writing against AI equivalents

What makes a sentence sound AI-written becomes recognizable through deliberate practice and pattern exposure.

Combining Human and Machine Detection

Optimal detection combines human expertise with algorithmic analysis. Human readers identify subtle contextual problems and tonal inconsistencies while automated systems measure statistical patterns and structural markers. Together, these approaches provide comprehensive content validation.

Recognizing the linguistic patterns and structural markers that distinguish AI-generated text from human writing is essential for maintaining content authenticity across professional, educational, and editorial contexts. By understanding what makes a sentence sound AI-written (vocabulary patterns, structural uniformity, emotional flatness, and contextual inconsistencies), content validators can make informed authenticity assessments. Detector AI provides advanced detection capabilities that analyze these patterns with high precision, offering integrated plagiarism checking and source matching to support educators, editors, and professionals in maintaining content integrity across all their validation needs.